
The Director of Analytics for the Obama 2008 campaign, Dan Siroker, ran an experiment in December 2007. He focussed on converting website visitors through website optimization and A/B testing to raise funds. The end result? Siroker credits that simple experiment with roughly $60 million in additional donations.

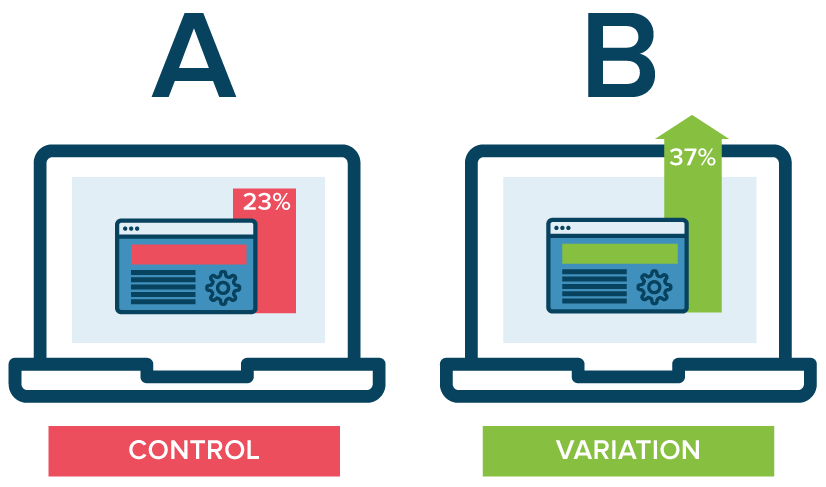

A/B testing (also called split testing) is a form of statistical analysis, used by marketers, in which you test 2 versions of the same thing to see which one performs better.

Image credits: Optimizely

For example, you might want to find out what makes your visitors sign up on your website. You can create 2 test groups, A and B, that differ by as little as a single word in the ‘Call to Action (CTA)’ on your website, or you can create 2 completely different versions of your website.
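One way to split website visitors into the two groups is to hash a visitor ID, so the same visitor always sees the same version. The sketch below is only an illustration: the function name `assign_group`, the experiment label `signup-cta`, and the visitor IDs are all hypothetical, and your testing tool may handle the split for you.

```python
import hashlib


def assign_group(visitor_id: str, experiment: str = "signup-cta") -> str:
    """Deterministically place a visitor in group 'A' or 'B'.

    Hashing the experiment name together with the visitor ID means the
    same visitor always lands in the same group for a given experiment.
    """
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    return "A" if int(digest, 16) % 2 == 0 else "B"


if __name__ == "__main__":
    # Hypothetical visitor IDs, used only to show the call.
    for visitor in ["visitor-101", "visitor-102", "visitor-103"]:
        print(visitor, "->", assign_group(visitor))
```

Because the assignment depends only on the ID and the experiment name, a returning visitor does not flip between versions halfway through the test.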

The most important thing to remember when running A/B tests is to test only one variable at a time. Let’s say you want to finalise the perfect outro with a CTA for all your social media videos. You run Group A with the CTA “Like our page for more” and publish it on a Wednesday at 10:00 AM, and you run Group B with the CTA “Follow us to get regular updates” and publish it on a Friday at 5:00 PM. Now, when you compare the 2 groups, which change do you give credit to? Was it the CTA that made one group outperform the other? Or was it the fact that you published on a different day and at a different time?
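A quick sanity check before you launch is to list everything that differs between your two variations. The sketch below does this for the example above; the dictionary keys (`cta`, `publish_day`, `publish_time`) and the helper name `changed_variables` are made up for illustration.

```python
def changed_variables(variant_a: dict, variant_b: dict) -> list:
    """Return the sorted names of the settings that differ between two variants."""
    keys = sorted(set(variant_a) | set(variant_b))
    return [key for key in keys if variant_a.get(key) != variant_b.get(key)]


group_a = {
    "cta": "Like our page for more",
    "publish_day": "Wednesday",
    "publish_time": "10:00",
}
group_b = {
    "cta": "Follow us to get regular updates",
    "publish_day": "Friday",
    "publish_time": "17:00",
}

differences = changed_variables(group_a, group_b)
print(differences)  # ['cta', 'publish_day', 'publish_time']

if len(differences) == 1:
    print("Clean test: only", differences[0], "changes.")
else:
    print("Confounded test:", len(differences), "variables change at once.")
```

If the list has more than one entry, hold the extra settings constant (here, the publishing day and time) and test the CTA on its own.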

While businesses and marketers have embraced A/B testing for websites, design, and static advertising elements, A/B testing for video marketing has remained a mystery to most.

There are lots of elements in a video that you can run A/B tests on: video script, video length, media files used, color palette, music selection, outro, and so on. Video ads in particular give you a long list of things to test. Keep in mind that the metric to track when judging the success of online video advertising is the click-through rate (CTR) of your ad: the number of clicks divided by the number of times the ad was shown.
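Click-through rate is simply clicks divided by impressions. As a minimal sketch, with made-up numbers and a hypothetical helper name `click_through_rate`:

```python
def click_through_rate(clicks: int, impressions: int) -> float:
    """Return clicks divided by impressions as a fraction between 0 and 1."""
    if impressions <= 0:
        raise ValueError("impressions must be a positive number")
    return clicks / impressions


# Illustrative numbers only, not real campaign data.
ctr = click_through_rate(clicks=120, impressions=4000)
print(f"CTR: {ctr:.2%}")
```

Comparing variations on the rate, rather than on raw click counts, keeps the comparison fair when one variation was shown more often than the other.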

Variation A: Images

Variation B: Video clips

The best place for you to experiment with this is the first 3 seconds of your video ad. These 3 seconds determine whether the viewer is interested in watching the rest of your ad or not. The first 3 seconds also influence the action the viewer will perform after watching your ad.

Variation A: Videos before images

Variation B: Images before videos

Another experiment you can run is altering the sequence of your media files. You might find, for instance, that viewers who see a video in the last scene are more likely to take an action than viewers who see an image in the last scene.

You can run experiments to see which text animation on your outro scenes results in more clicks and influences the action the viewer takes after watching your ad.

You can convert a scroll in the news feed to a click on your ad by varying the call to action in your video ad. A subtle change from “Learn More” to “Know More” or “Visit Us” could make a big difference.

You can also experiment with text videos and no-text videos to see what drives your target audience to perform an action after they watch your ad. Once you compare both variations, select the best performer and set it as a standard for all your video ads that target this specific audience.

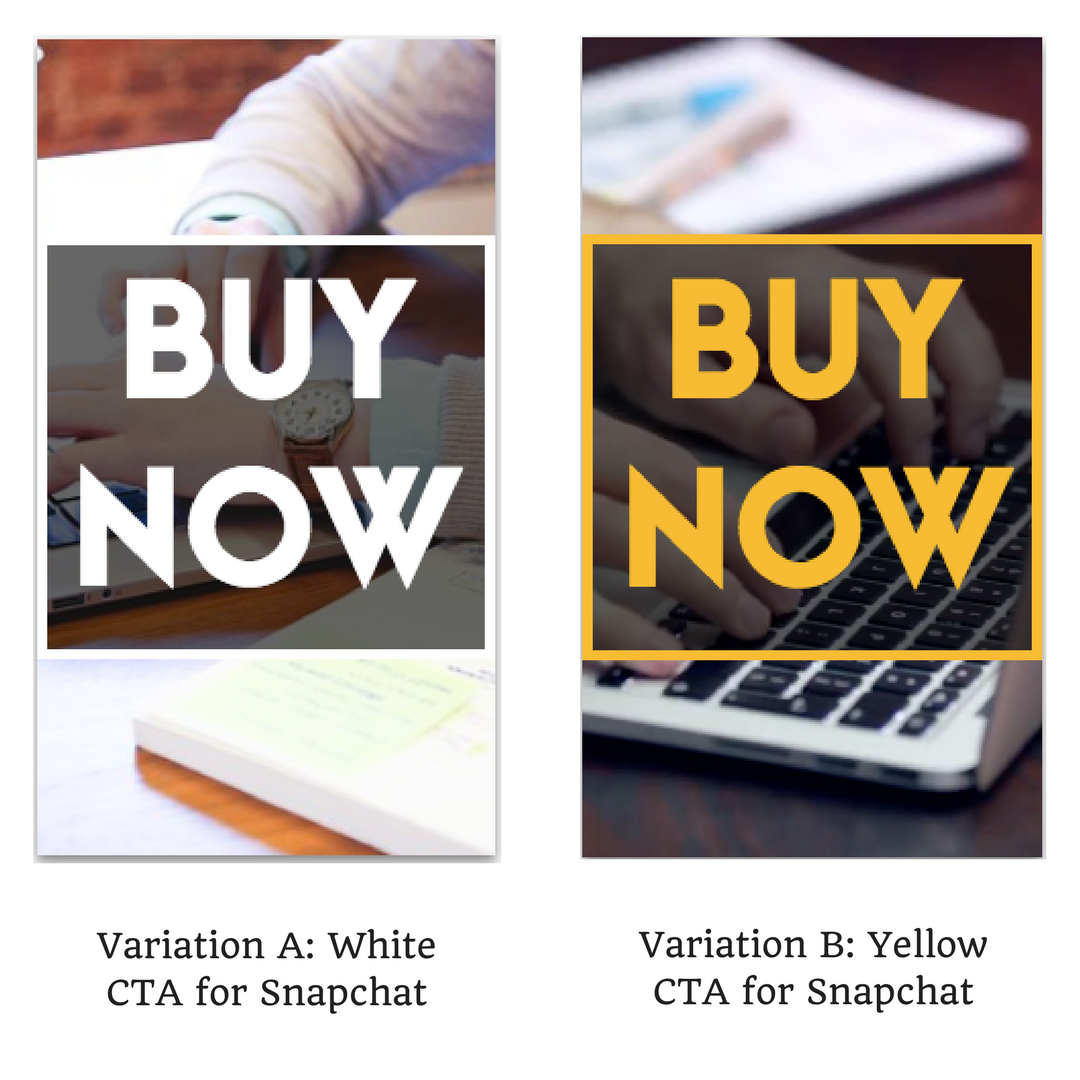

When selecting a color palette as a brand asset, it is important to test and compare different combinations. You can run experiments that vary the colors in the intro as well as the outro/CTA scene of your video ad.

Video ad variations for Snapchat

Here is an interesting read to help you select the right color palette for your videos.

Depending on the mood you want to set in the viewer’s mind when they see your ad, you can experiment with music genres and tracks.

You can also experiment with voiceovers to see whether your target audience is more likely to act on a video with a voiceover or without one. Since most users watch video ads on their phones, where videos are muted by default, your mobile video ads should, as a general rule of thumb, carry on-screen text along with the voiceover.

Here are some tips that’ll help you pick the perfect track for your videos.

On Facebook, YouTube and Instagram, the thumbnail you select for your video ad influences its views and click-through rate.

If the thumbnail is relevant to your ad and your target audience connects with it, more viewers are likely to take action after watching your video. For example, if you publish a video ad for camping equipment but use an image of a beach as the thumbnail, your ad is likely to perform poorly.

Why aren’t brands A/B testing videos? Video has a reputation for being expensive and time-consuming.

It seems a lot more difficult to create multiple variations of an online video ad for testing than it does to create a few different static images, or change the CTA on a pop-up. Honestly, that is not true. Running A/B tests for digital video ads is quite easy to learn and implement.

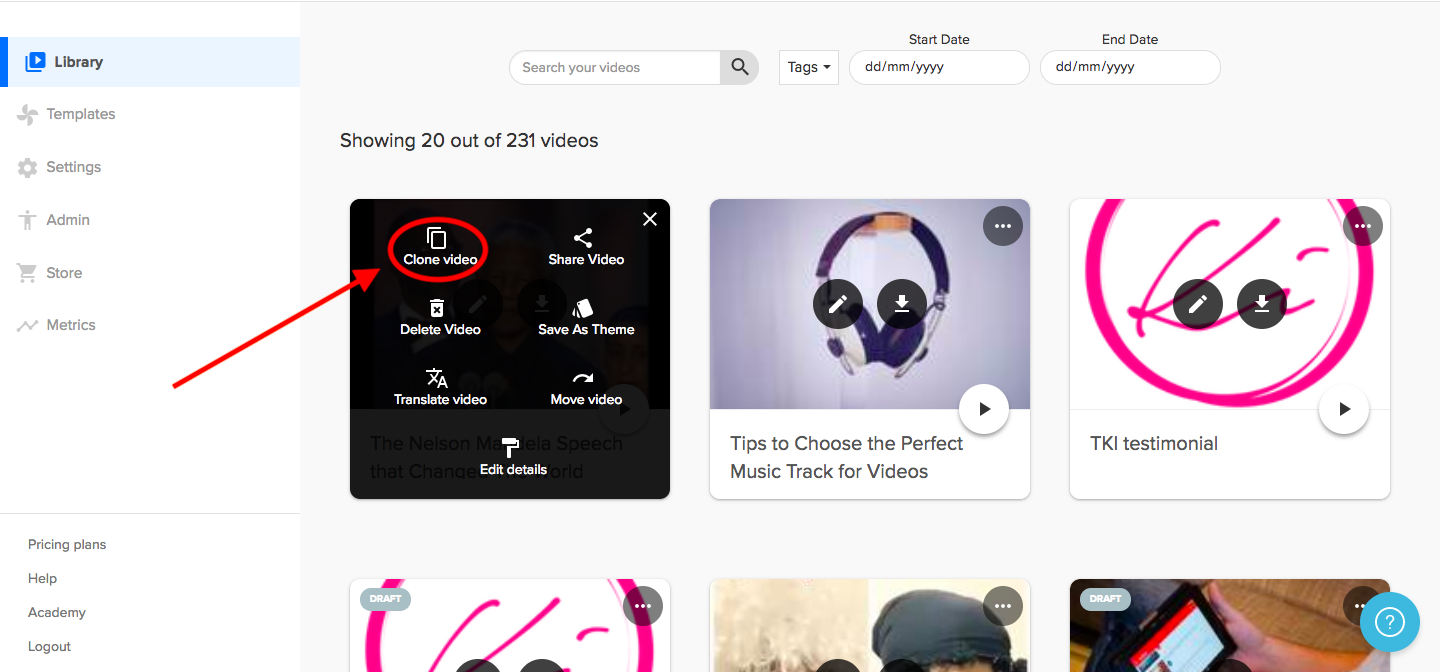

Rocketium lets you clone and edit your videos. This feature is very handy when you’re running A/B tests for your video ads.

Cloning a video from Rocketium dashboard

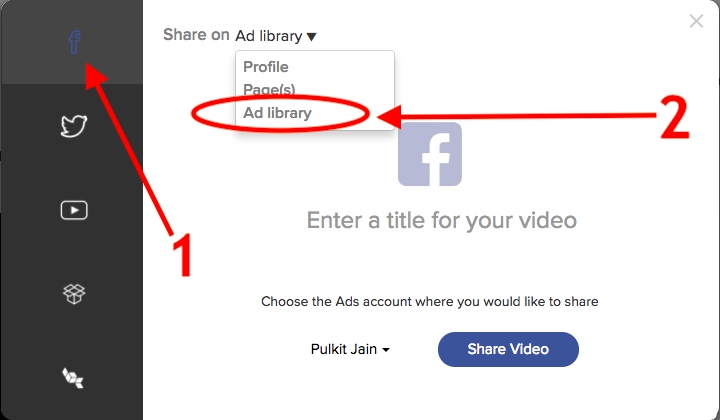

The clones of your videos can individually be uploaded to Facebook Ad Library directly using the Share feature.

Sharing your video ad to Facebook’s ad library

You can then use the metrics provided by Facebook to see which version of the video received the better click-through rate. The winner can then be used as the standard for all the online video ads your brand runs in the future.
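Before you declare a winner, it helps to check that the gap in click-through rate is larger than what chance alone would produce. One common way to do this is a two-proportion z-test; the sketch below is a generic statistical check, not a feature of Facebook or Rocketium, and the click and impression counts are invented for illustration.

```python
import math


def two_proportion_z_test(clicks_a, impressions_a, clicks_b, impressions_b):
    """Compare two click-through rates.

    Returns (z, p_value), where p_value is the two-sided probability of
    seeing a gap at least this large if both ads truly perform the same.
    """
    rate_a = clicks_a / impressions_a
    rate_b = clicks_b / impressions_b
    # Pooled rate under the assumption that both variations perform equally.
    pooled = (clicks_a + clicks_b) / (impressions_a + impressions_b)
    std_error = math.sqrt(
        pooled * (1 - pooled) * (1 / impressions_a + 1 / impressions_b)
    )
    if std_error == 0:
        raise ValueError("cannot compare: no variation in the data")
    z = (rate_b - rate_a) / std_error
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Illustrative numbers only: variation A vs. variation B of a video ad.
z, p_value = two_proportion_z_test(
    clicks_a=100, impressions_a=10_000, clicks_b=200, impressions_b=10_000
)
print(f"z = {z:.2f}, p-value = {p_value:.6f}")
if p_value < 0.05:
    print("The difference is unlikely to be due to chance.")
else:
    print("Keep the test running; the difference could still be noise.")
```

A p-value below 0.05 is a widely used threshold, but it is a convention rather than a rule. With very few impressions, even a large-looking gap in click-through rate can be noise.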